So my goal for the projected visual was always a “trace” of the tracked users interacting with the piece. I made a quick prototype in After Effects to show a user's motion through the space. I'd like the “slices” of motion to be thinner, but I think the render conveys the concept:

The “ripples” at the end of the video are caused by sound captured in the area. More on this later.

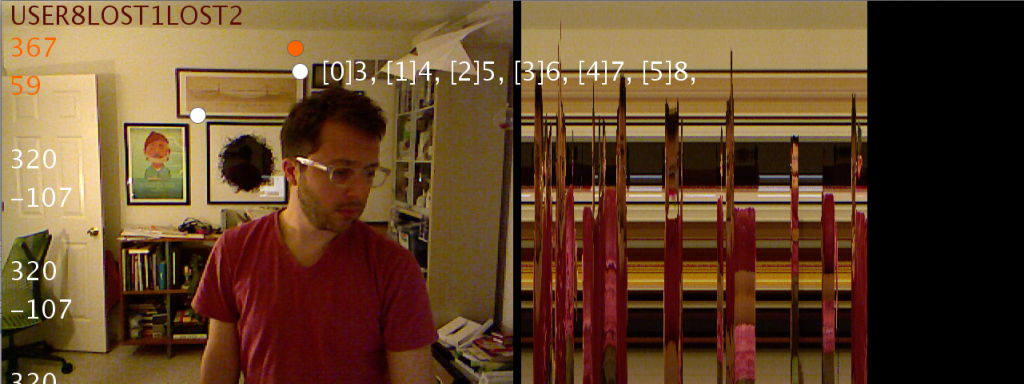

The next step in creating the visuals was programming the motion trace. I always imagined it looking a bit like a slit-scan photograph, except that the “source” of the scan would stay focused on the user as they move through the space, and the background would be removed. So far I have the tracking working, and I can draw a visual of what the camera captures, but that visual isn't driven by the tracked user yet (the user is being tracked, though, as you can see from the dots). There's no background removal yet either, but it's coming soon. Attached is a quick video recording from my phone (sped up so it's not as boring):
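To make the slit-scan idea concrete, here is a minimal sketch in Python with NumPy. It is not the code running in the piece. It assumes each camera frame arrives as an H x W (x C) array, that the tracker reports one horizontal pixel position for the user per frame, and, optionally, that a per-frame boolean foreground mask is available for background removal. `slit_scan_trace`, `user_xs`, `slice_width`, and `masks` are hypothetical names. Each frame contributes a thin vertical slice centred on the user, and the slices are laid side by side, so time runs left to right across the output.

```python
import numpy as np


def slit_scan_trace(frames, user_xs, slice_width=2, masks=None):
    """Build a slit-scan style trace whose slit follows a tracked user.

    Hypothetical sketch:
    frames      -- sequence of H x W (x C) arrays, one per camera frame
    user_xs     -- tracked horizontal position of the user in each frame (pixels)
    slice_width -- width in pixels of the slice taken from each frame
    masks       -- optional sequence of H x W boolean arrays, True = foreground
    """
    slices = []
    for i, (frame, x) in enumerate(zip(frames, user_xs)):
        w = frame.shape[1]
        # Centre the slit on the user, clamped so it stays inside the frame.
        left = int(round(x)) - slice_width // 2
        left = max(0, min(left, w - slice_width))
        band = frame[:, left:left + slice_width].copy()
        if masks is not None:
            # Assumed background removal: zero out pixels outside the user's mask.
            band[~masks[i][:, left:left + slice_width]] = 0
        slices.append(band)
    if not slices:
        raise ValueError("need at least one frame")
    # Lay slices side by side: column position in the output encodes time.
    return np.concatenate(slices, axis=1)


if __name__ == "__main__":
    # Five synthetic 4 x 10 RGB frames, each filled with its frame index.
    frames = [np.full((4, 10, 3), i, dtype=np.uint8) for i in range(5)]
    trace = slit_scan_trace(frames, user_xs=[0, 3, 5, 9, 12], slice_width=2)
    print(trace.shape)
```

A smaller `slice_width` gives the thinner slices I mentioned wanting in the After Effects prototype, at the cost of a shorter trace for the same number of frames.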

And here’s a clearer screenshot of the tracking test output. The visual on the right is what you’d see stretched across the display: